AI-powered decision making offers speed and consistency when data are rich and objectives are clear, but it introduces risk when inputs drift or biases persist. Its value hinges on governance, traceability, and independent checks that catch proxy (surrogate) biases hidden inside opaque models. The smarter path blends human oversight with rigorous risk controls to ensure accountability and ethical alignment. The question remains: can robust frameworks curb unseen risks well enough to sustain confidence as capabilities scale?

What AI-Powered Decisions Do Best (and Why It Works)

AI-powered decisions excel where data are rich, signals are well defined, and outcomes depend on timely, repeatable judgment. In such contexts, models can outperform manual processes on speed, consistency, and resource use. They succeed where objectives are clear and inputs are stable, but governance still matters: data drift, and a gradual drift away from the ethical standards a system was built to meet, can erode reliability if monitoring lags, so controls need to adapt over time.
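To make the data drift concern concrete, here is a minimal sketch of one common monitoring statistic, the Population Stability Index (PSI), which compares a feature's distribution at training time with its distribution in production. The function name, the bin count, and the sample data are all assumptions chosen for illustration; the article does not prescribe a specific drift metric.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one numeric feature; larger values mean more drift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical samples of a single feature.
baseline = [i / 100 for i in range(100)]       # training-time sample
stable = [i / 100 for i in range(100)]         # same distribution in production
shifted = [0.5 + i / 200 for i in range(100)]  # mass moved to the upper half

print(population_stability_index(baseline, stable))   # 0.0
print(population_stability_index(baseline, shifted))  # much larger: clear drift
```

A frequently quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but any alert threshold is a policy choice that should be tuned to the feature and the cost of a missed drift.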

Where Automation Falls Short: Bias, Transparency, and Accountability

Bias, transparency, and accountability mark the limits of automation even when data and models perform well. Bias can persist despite robust datasets, and transparency gaps can obscure the rationale behind a decision. In practice, organizations face risk from unseen proxy (surrogate) biases, misaligned incentives, and opaque feature influence. Detecting, auditing, and documenting these gaps is essential for responsible adoption that still leaves organizations free to innovate.
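One way to surface a proxy bias is to audit outcomes by group after the fact, even when the group label was never a model input. The sketch below computes per-group selection rates and the ratio between the lowest and highest rate. The function names and the audit log are hypothetical, and this is only one of several fairness measures an audit might use.

```python
from collections import defaultdict
from typing import Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, bool]]) -> dict[str, float]:
    """Share of favourable decisions per group, from (group, approved) pairs."""
    totals: dict[str, int] = defaultdict(int)
    approved: dict[str, int] = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical audit log: the model never saw the group label directly.
decisions = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 30 + [("B", False)] * 70
)
rates = selection_rates(decisions)
print(rates)                          # {'A': 0.6, 'B': 0.3}
print(disparate_impact_ratio(rates))  # 0.5
```

A ratio well below 1.0 does not prove the model is unfair, but it flags a gap that should be investigated and documented, for example by checking which input features correlate with group membership.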

Balancing Humans and Machines: Governance, Oversight, and Roles

Balancing humans and machines requires a clear governance framework that specifies roles, responsibilities, and escalation paths. Backed by transparent metrics and independent audits, that framework defines who holds decision authority, which risks are accepted, and how errors are remedied.

Human governance assigns accountability for outcomes, while ongoing oversight provides continuous verification.

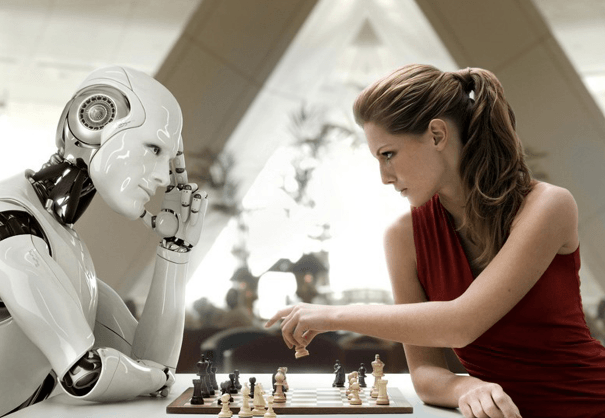

A human in the loop maintains the critical checks, balancing the system's autonomy against ethical constraints and safeguards that protect individual freedom.
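The roles and escalation paths described in this section can be sketched as a simple routing rule that decides when a model's output is applied automatically and when a person must step in. The thresholds, the route names, and the `high_stakes` flag are illustrative assumptions, not values taken from any particular governance standard.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Decision:
    case_id: str
    confidence: float  # model confidence in [0, 1]
    high_stakes: bool  # flagged by policy, not by the model


def route(
    decision: Decision,
    auto_threshold: float = 0.95,    # assumed placeholder value
    review_threshold: float = 0.70,  # assumed placeholder value
) -> Route:
    """Pick an escalation path for one model decision."""
    if decision.high_stakes:
        # High-stakes cases always get a human, whatever the confidence;
        # low-confidence ones go to the senior escalation path.
        if decision.confidence >= review_threshold:
            return Route.HUMAN_REVIEW
        return Route.ESCALATE
    if decision.confidence >= auto_threshold:
        return Route.AUTO_APPROVE
    if decision.confidence >= review_threshold:
        return Route.HUMAN_REVIEW
    return Route.ESCALATE


print(route(Decision("c-1", 0.98, high_stakes=False)))  # Route.AUTO_APPROVE
print(route(Decision("c-2", 0.98, high_stakes=True)))   # Route.HUMAN_REVIEW
print(route(Decision("c-3", 0.40, high_stakes=False)))  # Route.ESCALATE
```

Keeping the rule this explicit is a deliberate choice: auditors can read it, and changing a threshold becomes a reviewable governance decision rather than a hidden model setting.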

Practical Frameworks to Build Safer, Smarter AI Decisions

Practical frameworks for safer, smarter AI decisions center on structured governance, rigorous risk assessment, and repeatable processes that align model behavior with organizational objectives.

The approach emphasizes transparent measurement, ongoing validation, and independent audits to mitigate algorithmic biases and preserve trust.

Data provenance and lineage make decisions traceable, which supports accountability and gives teams the freedom to experiment in a disciplined, ethical way before and after deployment.
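As a rough illustration of traceability, the sketch below records each decision together with the model version, the dataset version, and a hash of the inputs, so that an auditor can later confirm what the system saw and which artifacts produced the result. The field names and version strings are hypothetical; a production lineage system would typically store far more context.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


def fingerprint(payload: dict) -> str:
    """Stable SHA-256 digest of a JSON-serialisable payload."""
    # Sorting keys makes the digest independent of dictionary ordering.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class DecisionRecord:
    model_version: str
    dataset_version: str
    input_hash: str
    output: str
    decided_at: str  # ISO 8601 timestamp in UTC


def record_decision(
    model_version: str, dataset_version: str, features: dict, output: str
) -> DecisionRecord:
    return DecisionRecord(
        model_version=model_version,
        dataset_version=dataset_version,
        input_hash=fingerprint(features),
        output=output,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


# Hypothetical identifiers and features, for illustration only.
record = record_decision(
    "credit-model-1.4.2",
    "applications-2024-06",
    {"income": 52000, "tenure_months": 18},
    "approve",
)
print(json.dumps(asdict(record), indent=2))
```

Storing a hash rather than the raw inputs lets the record prove what was evaluated without copying sensitive data into the audit log, although the original inputs must still be retained somewhere for the hash to be checkable.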

Frequently Asked Questions

How Do AI Decisions Impact Long-Term Organizational Culture and Trust?

AI decisions shape long-term culture by signaling which governance standards an organization actually upholds. They erode trust when transparency, accountability, and human oversight are neglected. Preventing that requires pragmatic, data-driven controls that reduce uncertainty without restricting people's freedom to exercise judgment.

Can AI Replace Expert Intuition in High-Uncertainty Scenarios?

67% of leaders report that AI supplements, but never replaces, expert judgment in high-uncertainty scenarios. The risk that comes with uncertainty remains, but effective decision governance and attention to stakeholder ethics can guide adoption, aligning AI with human insight and with an organization's aim of preserving freedom of action.

What Are the Hidden Costs of Deploying AI Decision Systems?

The hidden costs of deploying AI decision systems include the governance burden and data quality risks. A risk-aware cost-benefit calculation is essential: data governance should stay proportional to strategic value while preserving organizational freedom and adaptability.

How Should AI Decisions Handle Conflicting Stakeholder Values?

Handling conflicting values is a balancing act. AI decisions should reduce conflict and seek stakeholder alignment through transparent criteria, documented trade-offs, and participatory governance, supported by data-driven risk assessment and policies that respect stakeholders' freedom.

What Metrics Capture Downstream Societal Impacts of AI Decisions?

Downstream societal impacts are captured by tracking governance indicators and the outcomes of equity audits. Together these quantify risk, fairness, and long-term effects, which makes decisions more transparent and gives stakeholders who value freedom pragmatic, data-driven insight.

Conclusion

In the end, the data tell a cautious tale. AI decisions can accelerate insight and precision when signals are clear and lineage is unbroken, yet drift and bias can appear anywhere in the pipeline. The balance hinges on governance that is visible, audits that substantiate claims, and human checks that remain indispensable. As the stakes rise, reliance must be tempered with transparency, accountability, and iterative safeguards, lest smarter choices become riskier ones, hidden in plain sight until a costly misstep reveals the truth.