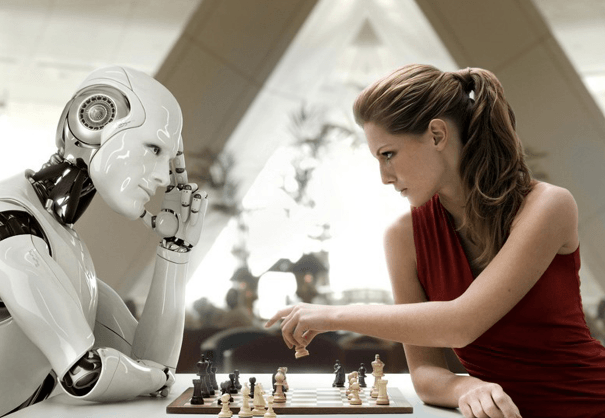

Modern games use AI opponents that combine adaptive planning, modular control, and readable behavior trees to pace challenges. Decision modules interlock to coordinate goals, which keeps play responsive yet bounded. Critics note a tension between emergent variety and predictable cues, and fairness can suffer if the data practices behind adaptation lack transparency. These systems shape pacing, balance, and replayability, but their ethical constraints and practical limits remain underexamined, leaving open the question of which encounters are truly fair.

How AI Opponents Adapt: Core Architectures and Techniques

How do AI opponents adapt, and what architectures underlie their variability? Most designs combine adaptive planning with modular control, in which decision modules interlock to respond to player strategies. Behavior trees supply readable, hierarchical behavior, while planners sequence goals and contingencies. The combination scales well, yet opponents still feel rigid when fixed rules override emergent play, which prompts calls for more fluid, autonomous variation.
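To make the hierarchy concrete, here is a minimal behavior tree sketch in Python. It is an illustration only, not taken from any particular engine: the node classes, the blackboard keys (`health`, `player_visible`), the threshold of 30, and the action names are all assumptions chosen for the example.

```python
from enum import Enum
from typing import Callable, Dict, List


class Status(Enum):
    SUCCESS = 1
    FAILURE = 2


Blackboard = Dict[str, object]  # shared state the nodes read and write


class Condition:
    """Leaf node that succeeds when its predicate holds."""

    def __init__(self, predicate: Callable[[Blackboard], bool]):
        self.predicate = predicate

    def tick(self, bb: Blackboard) -> Status:
        return Status.SUCCESS if self.predicate(bb) else Status.FAILURE


class Action:
    """Leaf node that records the chosen action on the blackboard."""

    def __init__(self, name: str):
        self.name = name

    def tick(self, bb: Blackboard) -> Status:
        bb["action"] = self.name
        return Status.SUCCESS


class Sequence:
    """Composite that fails as soon as any child fails."""

    def __init__(self, children: List[object]):
        self.children = children

    def tick(self, bb: Blackboard) -> Status:
        for child in self.children:
            if child.tick(bb) is Status.FAILURE:
                return Status.FAILURE
        return Status.SUCCESS


class Selector:
    """Composite that succeeds as soon as any child succeeds."""

    def __init__(self, children: List[object]):
        self.children = children

    def tick(self, bb: Blackboard) -> Status:
        for child in self.children:
            if child.tick(bb) is Status.SUCCESS:
                return Status.SUCCESS
        return Status.FAILURE


def build_enemy_tree() -> Selector:
    # Hypothetical priorities: retreat when hurt, attack when the
    # player is visible, otherwise fall back to patrolling.
    return Selector([
        Sequence([Condition(lambda bb: bb["health"] < 30), Action("retreat")]),
        Sequence([Condition(lambda bb: bb["player_visible"]), Action("attack")]),
        Action("patrol"),
    ])


def decide(health: int, player_visible: bool) -> str:
    bb: Blackboard = {"health": health, "player_visible": player_visible}
    build_enemy_tree().tick(bb)
    return str(bb["action"])
```

Calling `decide(20, True)` walks the selector from the highest-priority branch down, so the low-health check wins over the attack branch. The readability comes from the priorities being visible in the tree's shape, and the rigidity described above follows from the same property: the tree does only what its branches enumerate.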

What Makes AI Enemies Feel Smart and Fair

AI enemies feel smart and fair when their behavior balances competence with predictability. Critics argue that true sophistication requires adaptive strategies rather than scripted play. Fairness metrics should quantify challenge without tipping into frustration, while behavioral variance keeps encounters from going stale. Designers also need to tune resource management so that opponents respond to supplies and limits. When these pieces come together, players feel that the game respects their autonomy and challenges them rigorously.

Real-World Gameplay Impacts: Pacing, Balance, and Replayability

Real-world gameplay is shaped decisively by how pacing, balance, and replayability interact with AI opponents. Poorly tuned tempo can mire players in exhaustion or boredom, while rigid balance invites predictable exploits.

This dynamic reveals adaptive pacing as a design hinge, guiding challenge curves without stifling agency.
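One common way to realize adaptive pacing is a bounded difficulty controller that nudges a multiplier toward a target win rate. The sketch below assumes that approach; the class name `PacingController`, the default target of 0.5, the tolerance, the step size, and the bounds are illustrative values, not figures from any shipped game.

```python
from collections import deque


class PacingController:
    """Nudges a difficulty multiplier toward a target player win rate.

    All defaults are illustrative assumptions.
    """

    def __init__(self, target_win_rate=0.5, tolerance=0.2, step=0.1,
                 window=5, lo=0.5, hi=1.5):
        self.target = target_win_rate
        self.tolerance = tolerance
        self.step = step
        self.lo = lo
        self.hi = hi
        self.difficulty = 1.0
        # Only the most recent `window` outcomes are kept.
        self.results = deque(maxlen=window)

    def record_encounter(self, player_won: bool) -> float:
        """Record one encounter outcome and return the updated difficulty."""
        self.results.append(player_won)
        # Wait for a full window so one early result cannot swing difficulty.
        if len(self.results) == self.results.maxlen:
            win_rate = sum(self.results) / len(self.results)
            if win_rate > self.target + self.tolerance:
                self.difficulty += self.step   # player is cruising: push harder
            elif win_rate < self.target - self.tolerance:
                self.difficulty -= self.step   # player is struggling: ease off
            # Clamp so the challenge curve stays bounded, and round to
            # avoid floating-point drift from repeated steps.
            self.difficulty = round(min(self.hi, max(self.lo, self.difficulty)), 3)
        return self.difficulty
```

The clamp and the tolerance band are the parts that protect agency: the controller leaves difficulty alone while the player's results sit near the target, and it can never escalate or collapse past the configured bounds.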

Ethical data usage remains a silent constraint, shaping transparency, trust, and long-term engagement.

Designing With Player Data: Ethics, Privacy, and Practical Limits

Designing with player data demands a rigorous, principled approach that weighs anticipated gains against ethical costs. The practice invites scrutiny of data ethics and of the privacy implications embedded in adaptive systems. Critics argue for transparency, minimal collection, and robust consent. Pragmatic limits keep AI opponents useful without weaponizing raw behavior, profiling players, or exploiting vulnerabilities, which preserves user freedom and trust.
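As a rough illustration of minimal collection and consent, the sketch below stores only coarse per-session counts and records nothing unless the player has opted in. The names `SessionSummary` and `MinimalTelemetry` and the choice of win counts as the only signal are assumptions for the example, not a description of any real telemetry system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SessionSummary:
    """Coarse aggregates only: no raw inputs, timestamps, or identifiers."""
    encounters: int = 0
    wins: int = 0


class MinimalTelemetry:
    """Hypothetical collector that honors consent and keeps only counts."""

    def __init__(self, consent_given: bool):
        self.consent_given = consent_given
        self.summary = SessionSummary()

    def record(self, player_won: bool) -> None:
        # Without consent, nothing is stored at all.
        if not self.consent_given:
            return
        self.summary.encounters += 1
        self.summary.wins += int(player_won)

    def win_rate(self) -> Optional[float]:
        # None signals "no data", so callers fall back to default tuning.
        if self.summary.encounters == 0:
            return None
        return self.summary.wins / self.summary.encounters
```

An adaptive opponent that consumes only `win_rate()` can still tune its challenge, but it has no raw behavioral trace to profile, which is one way to express the practical limit described above.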

Frequently Asked Questions

How Do AI Opponents Evolve Across Multiple Playthroughs?

AI opponents evolve across playthroughs through adaptive pacing and procedural learning, but the process often emphasizes spectacle over substance, so the adaptation looks fluent yet breaks down easily. This design choice reflects a tension between AI evolution and player freedom, and it tends to favor predictable escalation over genuine variety.

Are AI Behaviors Influenced by Player Skill Over Time?

Adaptive learning appears to intensify as players improve, which suggests that AI behaviors shift with skill over time, although implementations vary from game to game. This shapes player perception and raises critical questions about fairness, transparency, and how independent adaptive opponents really are.

Can Players Customize AI Difficulty Beyond Presets?

Yes. Many modern games offer customizable difficulty beyond presets, though implementation varies. Critics argue that adaptive AI personalities dilute consistency and risk frustration or perceived unfairness, while proponents claim greater freedom and a more personalized challenge for discerning players.
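One simple way to go beyond presets is to treat each preset as a starting point and let players override individual parameters. The sketch below assumes that design; the field names (`enemy_accuracy`, `reaction_time_s`, `aggression`) and the preset values are hypothetical.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class DifficultySettings:
    enemy_accuracy: float   # chance to hit, 0.0 to 1.0
    reaction_time_s: float  # delay before the enemy responds, in seconds
    aggression: float       # tendency to push forward, 0.0 to 1.0


# Illustrative preset values, not taken from any real game.
PRESETS = {
    "easy": DifficultySettings(0.4, 0.8, 0.3),
    "normal": DifficultySettings(0.6, 0.5, 0.5),
    "hard": DifficultySettings(0.8, 0.3, 0.7),
}


def customize(preset: str, **overrides: float) -> DifficultySettings:
    """Return a copy of a preset with selected fields overridden."""
    base = PRESETS[preset]
    known = {f.name for f in fields(DifficultySettings)}
    unknown = set(overrides) - known
    if unknown:
        raise ValueError(f"unknown settings: {sorted(unknown)}")
    return replace(base, **overrides)
```

For example, `customize("normal", aggression=0.9)` keeps the normal accuracy and reaction time but makes enemies push harder. Because the presets are frozen, a custom profile never mutates the shared defaults.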

Do AI Decisions Factor in Game Lore or Storytelling?

The narrative relevance of AI is limited: opponent decisions rarely center on lore, and lore-driven decisions often remain superficial. Narrative weighting still matters, but it is seldom what drives opponent behavior.

What Happens When AI Exploits Unintended Player Actions?

When an AI exploits unintended player actions, the result is a design flaw that produces unpredictable outcomes. An adaptive AI may chase loopholes and undermine balance, yet the same behavior exposes systemic gaps and invites scrutiny and reform toward more resilient, principled game design.

Conclusion

In the end, AI opponents mirror the humans behind them: capable, fallible, and ultimately bound by design choices. Coincidences, such as unexpected synergies between planning modules and player quirks, offer moments of emergent wit, yet they also reveal how fragile supposed fairness can be. The critical edge lies in transparent ethics and measurable competence, not mystique. When intelligent enemies fall out of step with pacing or data practices, the illusion fractures. Balance, not bravado, decides the lasting value of modern adversaries.